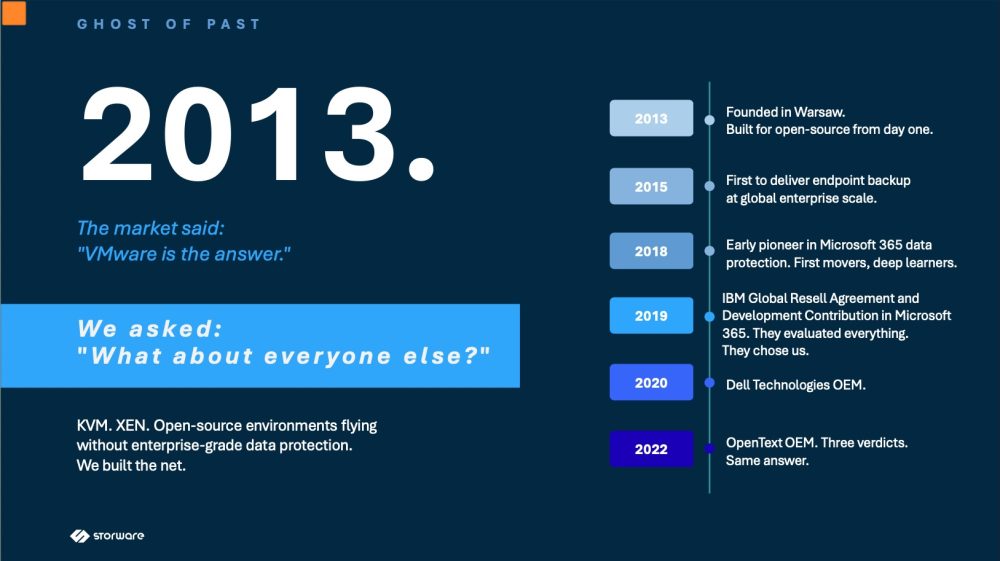

Storware is a Warsaw-based data protection company founded in 2013 on a clear early-mover thesis: the backup market was too VMware-centric, and open-source virtualization environments such as KVM and Xen lacked enterprise-grade backup solutions. Over the following decade, the company built a track record of industry firsts and strategic partnerships. Key milestones include delivering endpoint backup at global enterprise scale in 2015, pioneering Microsoft 365 data protection in 2018, signing a Global Resell Agreement with IBM in 2019, being selected by Dell Technologies for co-engineered appliance solutions in 2020, and becoming the data protection layer for OpenText's enterprise content management platform in 2022. A fourth major OEM partnership is expected in 2026 but has not yet been announced.

Market developments have since borne out Storware's bets. Broadcom's acquisition of VMware triggered licensing increases of up to 1,000%, forcing 300,000 enterprise customers to rethink their infrastructure strategies; this is exactly the migration wave Storware had anticipated. Ransomware remains a persistent threat, with the average data breach now costing $4.88M, and European regulations such as NIS2 and GDPR are now in force, further driving demand for immutable, auditable backup solutions.

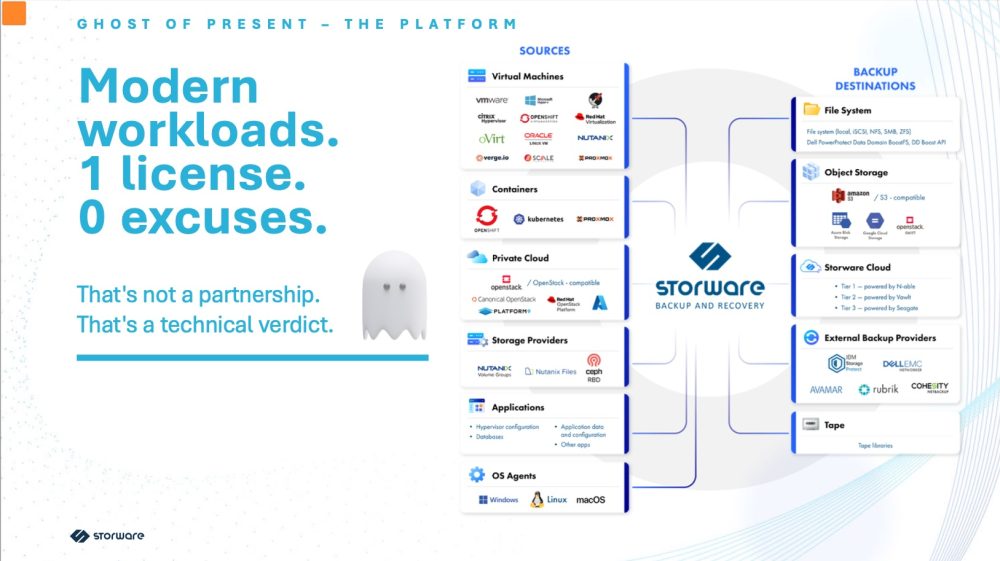

The Storware platform supports a broad range of environments under a single license: virtual machines on VMware, Hyper-V, Nutanix AHV, Proxmox, OpenShift and more; containers via Kubernetes; private clouds built on all major OpenStack distributions; storage providers such as Nutanix and Ceph RBD; applications and databases; and OS agents for Windows, Linux and macOS. Backup destinations include local file systems, S3-compatible object storage, the Storware Cloud, external providers including IBM, Dell EMC, Rubrik and Cohesity, and tape libraries.

Security features are vendor-independent and include IsoLayer Air-Gap isolation, immutable write-once backups, end-to-end encryption, MFA and RBAC, Keycloak SSO integration, and full audit trails to support NIS2 and GDPR compliance. The platform is available in three deployment modes: software-only; a combined hardware-and-software NVMe appliance with 10TB to 100TB of raw capacity; and a fully managed SaaS offering. The company counts more than 4,000 customers across 150 countries, including Disney, Cisco, Lenovo and Garmin.

Looking ahead, Storware's roadmap centers on data gravity: using its existing backup infrastructure as a migration engine. Capabilities available now include VMware-to-OpenStack and Citrix-to-OpenStack migration paths, while upcoming developments include OpenStack Replication DR (replication-based disaster recovery) with a recovery point objective (RPO) measured in seconds, and a next-generation NVMe backup appliance. The company positions itself around four core strengths: a post-Broadcom alternative built before the crisis, a decade of production-grade open-source expertise, European data sovereignty by design, and transparent licensing as a direct antidote to VMware-style pricing shocks.